- In production, use a Kubernetes release whose patch version is greater than 5, for example 1.26.7

- OS: Rocky 9.3

- Kernel: 5.14.0-362.8.1.el9_3.x86_64

- K8S: 1.26.11

- containerd: v1.6.21

- calico: 1.24

Cluster environment

The deployment uses three master nodes and two worker nodes.

| Hostname | IP/VIP | Software |
|---|---|---|
| k8s-master01 | 192.168.77.91 | etcd, apiserver, scheduler, controller-manager, kube-proxy, kubelet |
| k8s-master02 | 192.168.77.92 | etcd, apiserver, scheduler, controller-manager, kube-proxy, kubelet |
| k8s-master03 | 192.168.77.93 | etcd, apiserver, scheduler, controller-manager, kube-proxy, kubelet |
| k8s-node01 | 192.168.77.94 | kube-proxy, kubelet |
| k8s-node02 | 192.168.77.95 | kube-proxy, kubelet |
| haproxy01 | 192.168.77.101 | haproxy, keepalived |
| haproxy02 | 192.168.77.102 | haproxy, keepalived |
| VIP | 192.168.77.100 | IPVS |

Basic environment configuration

Set a static IP

List the network connections:

nmcli connection show

Set the static IP address, subnet mask, gateway, and DNS server:

sudo nmcli connection modify ens192 ipv4.addresses 192.168.77.91/24

sudo nmcli connection modify ens192 ipv4.gateway 192.168.77.1

sudo nmcli connection modify ens192 ipv4.dns 223.5.5.5

sudo nmcli connection modify ens192 ipv4.method manual

The configuration is saved to /etc/NetworkManager/system-connections/ens192.nmconnection.

Activate the connection:

sudo nmcli connection up ens192

Host security settings

# Disable the firewall

systemctl disable --now firewalld

iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat

iptables -P FORWARD ACCEPT

# Disable SELinux

setenforce 0

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

# Disable swap

swapoff -a

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

If swap is enabled, kubelet fails to start. Setting --fail-swap-on=false works around this, but it is not recommended.
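The sed expression above only matches swap entries whose fields are separated by single spaces, and it comments the line again on every run. A minimal sketch of a more tolerant variant is shown below, run against a scratch file rather than the real /etc/fstab. The sample entries and the helper name `disable_swap_entries` are illustrative assumptions, not taken from a real host.

```shell
# Build a sample fstab in a temporary file (contents are made up for illustration).
fstab=$(mktemp)
printf '%s\n' \
  '/dev/mapper/rl-root / xfs defaults 0 0' \
  '/dev/mapper/rl-swap none swap defaults 0 0' \
  '#/dev/sdb1 none swap defaults 0 0' > "$fstab"

# Comment out active swap entries: skip lines that are already comments,
# and accept spaces or tabs around the "swap" field.
disable_swap_entries() {
  sed -E '/^[[:space:]]*#/!{/[[:space:]]swap[[:space:]]/s/^/#/;}' "$1"
}

result=$(disable_swap_entries "$fstab")
printf '%s\n' "$result"
rm -f "$fstab"
```

Because already-commented lines are skipped, running the same expression a second time leaves the file unchanged.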

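Configure passwordless sudo

Configure passwordless login from Master01 to the other nodes

On Rocky 9.0, SSH login as root is disabled by default, so an SSH user with sudo privileges is used here.

Use visudo to allow passwordless sudo for the wheel group

Run visudo and uncomment line 110:

## Same thing without a password

%wheel ALL=(ALL) NOPASSWD: ALL

Add the regular user to the wheel group. You must log out of the terminal and log in again for passwordless sudo to take effect.

usermod -aG wheel sunday

Distribute the public key

This assumes that the SSH user on every server is sunday with the password 123456; change these as needed.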

yum install -y expect

ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa

export user=sunday

export pass=123456

host=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

for host in ${host[@]};do expect -c "

spawn ssh-copy-id -i /root/.ssh/id_rsa.pub $user@$host

expect {

\"*yes/no*\" {send \"yes\r\"; exp_continue}

\"*password*\" {send \"$pass\r\"; exp_continue}

\"*Password*\" {send \"$pass\r\";}

}";

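done

Skip the SSH host key confirmation

dnf install -y sshpass

user=sunday

# sshpass -e reads the password from the SSHPASS environment variable

export SSHPASS=123456

host=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

for host in ${host[@]};do

sshpass -e ssh-copy-id -o StrictHostKeyChecking=no $user@$host

done

Change the hostname

hostnamectl set-hostname k8s-master01

bash # start a new shell so the change takes effect

Update /etc/hosts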

cat >> /etc/hosts <<EOF

192.168.77.91 k8s-master01

192.168.77.92 k8s-master02

192.168.77.93 k8s-master03

192.168.77.94 k8s-node01

192.168.77.95 k8s-node02

192.168.77.100 apiserver.sundayhk.com

192.168.77.101 haproxy01

192.168.77.102 haproxy02

EOF

Set the hostnames of the other servers in bulk

host=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

for i in ${host[@]};do ssh sunday@$i sudo hostnamectl set-hostname $i;done

Alibaba Cloud repo mirror

sed -e 's|^mirrorlist=|#mirrorlist=|g' -e 's|^#baseurl=http://dl.rockylinux.org/$contentdir|baseurl=https://mirrors.aliyun.com/rockylinux|g' -i.bak /etc/yum.repos.d/rocky-*.repo

dnf makecache

Install basic tools

dnf install -y wget tree curl bash-completion jq vim net-tools telnet git lrzsz epel-release rsync

Time synchronization

Rocky 9.0 installs chrony by default and the service is already running. To change the NTP server, edit /etc/chrony.conf.

timedatectl set-timezone Asia/Shanghai

timedatectl set-local-rtc 0

ntp="ntp.aliyun.com"

lan="192.168.77.0/24"

sed -i "s@^pool .*@pool $ntp iburst@" /etc/chrony.conf

sed -i "s@^#allow .*@allow $lan@" /etc/chrony.conf

# dnf install chrony -y

systemctl restart chronyd

# Verify with the client

chronyc sources -v

Point chrony on the other servers at this server (k8s-master01)

ntp="192.168.77.91"

sed -i "s@^pool .*@pool $ntp iburst@" /etc/chrony.conf

systemctl restart chronyd

chronyc sources -v

...

^? 192.168.77.91 0 6 0 - +0ns[ +0ns] +/- 0ns

Configure limits

cat >> /etc/security/limits.conf <<EOF

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

echo "ulimit -SHn 65535" >> /etc/profile

source /etc/profile

Kernel parameters

These kernel parameters are required by the k8s cluster; configure them on all nodes.

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.swappiness = 0

vm.overcommit_memory=1

vm.panic_on_oom=0

vm.max_map_count=655360

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

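sysctl --system

Upgrade the kernel

The Rocky 9.3 kernel is new enough, so no upgrade is needed.

$ uname -r

5.14.0-362.8.1.el9_3.x86_64

Base components

This section installs the components used by the cluster, such as IPVS and the Kubernetes components.

Install ipvs

dnf install -y ipvsadm ipset sysstat conntrack libseccomp

Configure the ipvs modules on all nodes. From kernel 4.19 onward nf_conntrack_ipv4 has been renamed to nf_conntrack; on kernels 4.18 and earlier use nf_conntrack_ipv4.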
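The version rule above can be sketched as a small shell function that picks the module name from a kernel release string. `conntrack_module` is a hypothetical helper, and the 4.19 threshold is taken from the note above. Distribution kernels sometimes backport such renames, so running `modinfo` on the target host is a more direct check than comparing version numbers.

```shell
# Pick the conntrack module name for a given kernel release string.
# conntrack_module is a hypothetical helper; the 4.19 threshold comes from the note above.
conntrack_module() {
  release=$1
  major=${release%%.*}
  rest=${release#*.}
  minor=${rest%%.*}
  minor=${minor%%[!0-9]*}      # drop any non-numeric suffix such as "-rc1"
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "${minor:-0}" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

# Report the module name for the running kernel.
conntrack_module "$(uname -r)"
```

On the Rocky 9.3 kernel used in this guide (5.14.0), the function selects nf_conntrack, which matches the module list that follows.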

cat > /etc/modules-load.d/ipvs.conf << EOF

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

systemctl restart systemd-modules-load.service

Check that the modules are loaded

lsmod | grep -E "ip_vs|nf_conntrack"

Install containerd

Set up containerd and the CNI plugins

wget https://github.com/containerd/containerd/releases/download/v1.6.21/cri-containerd-cni-1.6.21-linux-amd64.tar.gz

# Download runc

wget -O /usr/local/bin/runc https://github.com/opencontainers/runc/releases/download/v1.1.10/runc.amd64

chmod +x /usr/local/bin/runc

mkdir -p /etc/cni/net.d /opt/cni/bin

tar -C / -xvzf cri-containerd-cni-1.6.21-linux-amd64.tar.gz

mv /etc/cni/net.d/10-containerd-net.conflist /etc/cni/net.d/10-containerd-net.conflist.bak

Configure the kernel modules required by containerd

cat > /etc/modules-load.d/containerd.conf <<EOF

overlay

br_netfilter

EOF

systemctl restart systemd-modules-load.service

Configure the kernel parameters required by containerd

cat > /etc/sysctl.d/99-kubernetes-cri.conf << EOF

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

sysctl --system

Configure containerd

mkdir /etc/containerd -p

containerd config default > /etc/containerd/config.toml

sed -i 's/registry.k8s.io/registry.aliyuncs.com\/google_containers/' /etc/containerd/config.toml

sed -i 's/SystemdCgroup \= false/SystemdCgroup \= true/' /etc/containerd/config.toml

#sed -i 's/snapshotter = "overlayfs"/snapshotter = "native"/' /etc/containerd/config.toml

containerd service

cat > /etc/systemd/system/containerd.service <<EOF

[Unit]

Description=containerd container runtime

Documentation=https://containerd.io

After=network.target local-fs.target

[Service]

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/containerd

Type=notify

Delegate=yes

KillMode=process

Restart=always

RestartSec=5

LimitNPROC=infinity

LimitCORE=infinity

LimitNOFILE=infinity

TasksMax=infinity

OOMScoreAdjust=-999

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now containerd

systemctl status containerd

Distribute the containerd binaries

host=(k8s-master02 k8s-master03 k8s-node01 k8s-node02)

user=sunday

for host in ${host[@]}; do \

ssh $user@$host "sudo mkdir -p /etc/containerd /opt/cni/bin /opt/containerd /etc/cni/net.d"; \

rsync --rsync-path="sudo rsync" /usr/local/bin/container* /usr/local/bin/{crictl,critest,ctd-decoder,ctr,nerdctl,runc} $user@$host:/usr/local/bin/; \

rsync --rsync-path="sudo rsync" /opt/cni/bin/* $user@$host:/opt/cni/bin/; \

rsync --rsync-path="sudo rsync" /etc/systemd/system/containerd.service $user@$host:/etc/systemd/system/; \

rsync --rsync-path="sudo rsync" /etc/containerd/config.toml $user@$host:/etc/containerd/; \

rsync --rsync-path="sudo rsync" /etc/modules-load.d/containerd.conf $user@$host:/etc/modules-load.d/; \

rsync --rsync-path="sudo rsync" /etc/sysctl.d/99-kubernetes-cri.conf $user@$host:/etc/sysctl.d/;

done

Start containerd

host=(k8s-master02 k8s-master03 k8s-node01 k8s-node02)

user=sunday

for host in ${host[@]}; do \

ssh $user@$host "sudo systemctl daemon-reload; sudo systemctl enable --now containerd";

done

Test containerd

$ ctr ns list

NAME LABELS

Initially the list is empty.

# Create a namespace

ctr ns create test

$ ctr ns list

NAME LABELS

default

test

# Pull the busybox image as a test. ctr needs the full image path; with no namespace given, the image is stored in the default namespace

ctr images pull docker.io/library/busybox:latest

# List images; the test namespace has none

$ ctr -n test images list

REF TYPE DIGEST SIZE PLATFORMS LABELS

# The default namespace holds the busybox image

$ ctr images list

REF TYPE DIGEST SIZE PLATFORMS LABELS

docker.io/library/busybox:latest application/vnd.docker.distribution.manifest.list.v2+json sha256:1ceb872bcc68a8fcd34c97952658b58086affdcb604c90c1dee2735bde5edc2f 2.1 MiB linux/386,linux/amd64,linux/arm/v5,linux/arm/v6,linux/arm/v7,linux/arm64/v8,linux/mips64le,linux/ppc64le,linux/riscv64,linux/s390x -

Download the etcd binaries

wget https://github.com/etcd-io/etcd/releases/download/v3.5.9/etcd-v3.5.9-linux-amd64.tar.gz

Extract and install into /usr/local/bin/

tar -xf etcd-v3.5.9-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.9-linux-amd64/etcd{,ctl}

masters='k8s-master02 k8s-master03'

user=sunday

for i in $masters; do

echo $i;

rsync --rsync-path="sudo rsync" /usr/local/bin/etcd* $user@$i:/usr/local/bin/;

done

etcdctl version

Download the Kubernetes binaries

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.26.md

wget https://storage.googleapis.com/kubernetes-release/release/v1.26.11/kubernetes-server-linux-amd64.tar.gz

Extract and install into /usr/local/bin/

tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

Distribute the master components

masters='k8s-master02 k8s-master03'

user=sunday

for i in $masters; do

echo $i;

rsync --rsync-path="sudo rsync" /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $user@$i:/usr/local/bin/;

done

Distribute the node components

nodes='k8s-node01 k8s-node02'

user=sunday

for i in $nodes; do

rsync --rsync-path="sudo rsync" /usr/local/bin/kube{let,-proxy} $user@$i:/usr/local/bin/;

done

Check the versions and that the binaries work

ls -l /usr/local/bin

kubelet --version

Download the cfssl tools

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64 -O /usr/local/bin/cfssl

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64 -O /usr/local/bin/cfssljson

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl-certinfo_1.6.1_linux_amd64 -O /usr/local/bin/cfssl-certinfo

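# Make them executable

chmod +x /usr/local/bin/cfssl*

Generate certificates

Generate the etcd certificates

Create the etcd certificate directory on the master node

mkdir -p /opt/pki/etcd

etcd CA certificate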

cd /opt/pki/etcd

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"peer": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

CA certificate signing request file for etcd

cat > etcd-ca-csr.json << EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "Etcd",

"OU": "Etcd Security"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

Create the certificate signing request file used by the etcd cluster

cat > etcd-csr.json << EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "etcd",

"OU": "Etcd Security"

}

]

}

EOF

Generate the CA root certificate used by the etcd cluster

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /opt/pki/etcd/etcd-ca

Generate the etcd certificate and key

cfssl gencert \

-ca=/opt/pki/etcd/etcd-ca.pem \

-ca-key=/opt/pki/etcd/etcd-ca-key.pem \

-config=/opt/pki/etcd/ca-config.json \

-hostname=k8s-master01,k8s-master02,k8s-master03,192.168.77.91,192.168.77.92,192.168.77.93 \

-profile=peer etcd-csr.json | cfssljson -bare /opt/pki/etcd/etcd

# ls -l

total 36

-rw-r--r--. 1 root root 288 Dec 10 11:58 ca-config.json

-rw-r--r--. 1 root root 1054 Dec 10 12:01 etcd-ca.csr

-rw-r--r--. 1 root root 253 Dec 10 12:01 etcd-ca-csr.json

-rw-------. 1 root root 1679 Dec 10 12:01 etcd-ca-key.pem

-rw-r--r--. 1 root root 1330 Dec 10 12:01 etcd-ca.pem

-rw-r--r--. 1 root root 1127 Dec 10 12:13 etcd.csr

-rw-r--r--. 1 root root 214 Dec 10 12:04 etcd-csr.json

-rw-------. 1 root root 1679 Dec 10 12:13 etcd-key.pem

-rw-r--r--. 1 root root 1509 Dec 10 12:13 etcd.pem

Sync the certificates to the other master nodes

user=sunday

for i in k8s-master01 k8s-master02 k8s-master03;do

ssh $user@$i "sudo mkdir -p /etc/etcd/ssl"

rsync --rsync-path="sudo rsync" /opt/pki/etcd/etcd{,-key,-ca}.pem $user@$i:/etc/etcd/ssl/

done

Generate the Kubernetes certificates

Kubernetes CA certificate

Create the Kubernetes certificate directory on the master01 node

mkdir -p /opt/pki/k8s

cd /opt/pki/k8s

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

Create the configuration file for the CA certificate signing request

cat > ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "Kubernetes",

"OU": "System"

}

],

"ca": {

"expiry": "876000h"

}

}

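EOF

Generate the CA certificate and private key

cfssl gencert -initca ca-csr.json | cfssljson -bare /opt/pki/k8s/ca

apiserver certificate

Use the self-signed CA to issue the kube-apiserver HTTPS certificate

Create the kube-apiserver certificate signing request file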

cat > apiserver-csr.json << EOF

{

"CN": "kube-apiserver",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "Kubernetes",

"OU": "System"

}

]

}

EOF

Generate the apiserver certificate and key

cfssl gencert \

-ca=/opt/pki/k8s/ca.pem \

-ca-key=/opt/pki/k8s/ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-hostname=10.96.0.1,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,192.168.77.90,192.168.77.91,192.168.77.92,192.168.77.93,192.168.77.94,192.168.77.95,192.168.77.96,192.168.77.97,192.168.77.98,192.168.77.99,192.168.77.100 \

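-profile=kubernetes apiserver-csr.json | cfssljson -bare /opt/pki/k8s/apiserver

Note: the hosts list above (the -hostname flag) must contain every Master, LB, and VIP address, with none missing. To make later expansion easier, add a few spare IPs in reserve for nodes added in the future.

10.96.0.1 is the first address of the service CIDR and has to be calculated; 192.168.77.100 is the high-availability VIP.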
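The calculation mentioned above is the network address of the service CIDR plus one. A minimal sketch in portable shell arithmetic follows; `first_service_ip` is a hypothetical helper name and is not part of any Kubernetes tool.

```shell
# Print the first usable address (network address + 1) of an IPv4 CIDR.
# first_service_ip is a hypothetical helper, not part of any Kubernetes tool.
first_service_ip() {
  cidr=$1
  ip=${cidr%/*}
  prefix=${cidr#*/}

  # Split the dotted quad into four octets.
  old_ifs=$IFS
  IFS=.
  set -- $ip
  IFS=$old_ifs

  # Combine the octets, mask off the host bits, then add one.
  addr=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  first=$(( (addr & mask) + 1 ))

  printf '%d.%d.%d.%d\n' \
    $(( (first >> 24) & 255 )) $(( (first >> 16) & 255 )) \
    $(( (first >> 8) & 255 )) $(( first & 255 ))
}

first_service_ip 10.96.0.0/12   # service CIDR used in this guide; prints 10.96.0.1
```

If the service CIDR is changed, the resulting address has to replace 10.96.0.1 in the apiserver certificate's host list.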

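apiserver aggregation certificate

Another way to reach kube-apiserver is through a proxy such as kube-proxy, and this certificate supports SSL access through that proxy. In this mode the request is sent to the proxy over HTTP. The proxy then forwards it to kube-apiserver and, before doing so, adds the certificate information to the request headers.

client -- sends request --> proxy -- adds header information, sends request --> kube-apiserver

If kube-proxy is not running on the host where apiserver runs, services cannot be reached through their ClusterIP, and --enable-aggregator-routing=true is required.

Create the CA signing request file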

cat > front-proxy-ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"ca": {

"expiry": "876000h"

}

}

EOF

Create the front-proxy-client certificate request file

cat > front-proxy-client-csr.json << EOF

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

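EOF

This root certificate is used in the requestheader-client-ca-file option; kube-apiserver uses it to verify that a client certificate was issued by itself.

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /opt/pki/k8s/front-proxy-ca

Generate the proxy-layer certificate. The proxy uses this certificate to authenticate to kube-apiserver on behalf of the user.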

cfssl gencert \

-ca=/opt/pki/k8s/front-proxy-ca.pem \

-ca-key=/opt/pki/k8s/front-proxy-ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /opt/pki/k8s/front-proxy-client

controller-manager certificate

cat > controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "system:kube-controller-manager",

"OU": "System"

}

]

}

EOF

cfssl gencert \

-ca=/opt/pki/k8s/ca.pem \

-ca-key=/opt/pki/k8s/ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-profile=kubernetes \

controller-manager-csr.json | cfssljson -bare /opt/pki/k8s/controller-manager

# Note: if you are not using an HA VIP such as haproxy/nginx, change 192.168.77.100 to the address or domain name of master01

mkdir -p /etc/kubernetes/

# Set a cluster entry

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/pki/k8s/ca.pem \

--embed-certs=true \

--server=https://192.168.77.100:8443 \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# Set a context entry

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-credentials: set a user entry

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/opt/pki/k8s/controller-manager.pem \

--client-key=/opt/pki/k8s/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# Set the default context

kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

scheduler certificate

cat > scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

EOF

cfssl gencert \

-ca=/opt/pki/k8s/ca.pem \

-ca-key=/opt/pki/k8s/ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /opt/pki/k8s/scheduler

# Note: if this is not an HA cluster, change 192.168.77.100 to the address or domain name of master01

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/pki/k8s/ca.pem \

--embed-certs=true \

--server=https://192.168.77.100:8443 \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/opt/pki/k8s/scheduler.pem \

--client-key=/opt/pki/k8s/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kube-proxy certificate

cat > kube-proxy-csr.json << EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "system:kube-proxy",

"OU": "System"

}

]

}

EOF

cfssl gencert \

-ca=/opt/pki/k8s/ca.pem \

-ca-key=/opt/pki/k8s/ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-profile=kubernetes \

kube-proxy-csr.json | cfssljson -bare /opt/pki/k8s/kube-proxy

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/pki/k8s/ca.pem \

--embed-certs=true \

--server=https://192.168.77.100:8443 \

--kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=/opt/pki/k8s/kube-proxy.pem \

--client-key=/opt/pki/k8s/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context kube-proxy@kubernetes \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

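kubectl config use-context kube-proxy@kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

ServiceAccount Key secret

Create the ServiceAccount key (secret)

This is one of the authentication methods for ServiceAccount accounts. When a ServiceAccount is created, a secret bound to it is created as well, and a token is generated for that secret. This key pair is provided only to controller-manager: controller-manager signs the token with sa.key, and the master nodes verify the signature with the public key sa.pub.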
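The sign-then-verify relationship between a private key and its public key can be tried in isolation with openssl. The sketch below uses a throwaway key pair in a scratch directory and a stand-in payload; real ServiceAccount tokens are JWTs assembled by Kubernetes itself, so this only illustrates the key-pair mechanics and is not how the cluster produces tokens.

```shell
# Work in a scratch directory; these are throwaway keys, not the cluster's sa.key.
workdir=$(mktemp -d)
openssl genrsa -out "$workdir/sa.key" 2048 2>/dev/null
openssl rsa -in "$workdir/sa.key" -pubout -out "$workdir/sa.pub" 2>/dev/null

# Stand-in payload (an assumption for illustration only).
printf '%s' 'example-token-payload' > "$workdir/payload"

# Signing side: sign the payload with the private key.
openssl dgst -sha256 -sign "$workdir/sa.key" \
  -out "$workdir/payload.sig" "$workdir/payload"

# Verifying side: check the signature using only the public key.
verify_result=$(openssl dgst -sha256 -verify "$workdir/sa.pub" \
  -signature "$workdir/payload.sig" "$workdir/payload")
echo "$verify_result"

rm -rf "$workdir"
```

The verifying side never needs sa.key, which is why only sa.pub has to be readable by components that merely validate tokens.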

openssl genrsa -out /opt/pki/k8s/sa.key 2048

openssl rsa -in /opt/pki/k8s/sa.key -pubout -out /opt/pki/k8s/sa.pub

admin certificate

cat > admin-csr.json << EOF

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Guangdong",

"L": "Guangzhou",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert \

-ca=/opt/pki/k8s/ca.pem \

-ca-key=/opt/pki/k8s/ca-key.pem \

-config=/opt/pki/k8s/ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /opt/pki/k8s/admin

# Note: if this is not an HA cluster, change 192.168.77.100 to the address or domain name of master01

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/pki/k8s/ca.pem \

--embed-certs=true \

--server=https://192.168.77.100:8443 \

--kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin \

--client-certificate=/opt/pki/k8s/admin.pem \

--client-key=/opt/pki/k8s/admin-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes \

--cluster=kubernetes \

--user=kubernetes-admin \

--kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes \

--kubeconfig=/etc/kubernetes/admin.kubeconfig

Sync to the other masters

mkdir -p /etc/kubernetes/pki/

# sa.key and sa.pub do not match *.pem, so copy them explicitly

cp -r /opt/pki/k8s/*.pem /opt/pki/k8s/sa.key /opt/pki/k8s/sa.pub /etc/kubernetes/pki/

user=sunday

for i in k8s-master02 k8s-master03;do

ssh $user@$i "sudo mkdir -p /etc/kubernetes/pki/";

rsync --rsync-path="sudo rsync" /etc/kubernetes/pki/*.pem /etc/kubernetes/pki/sa.key /etc/kubernetes/pki/sa.pub $user@$i:/etc/kubernetes/pki/;

rsync --rsync-path="sudo rsync" /etc/kubernetes/*.kubeconfig $user@$i:/etc/kubernetes/;

done

[root@k8s-master01 ~]# ls -l /etc/kubernetes/

total 36

-rw------- 1 root root 6456 Oct 18 03:06 admin.kubeconfig

-rw------- 1 root root 6594 Oct 18 20:45 controller-manager.kubeconfig

-rw------- 1 root root 6458 Oct 18 03:17 kube-proxy.kubeconfig

drwxr-xr-x 2 root root 4096 Oct 18 20:57 pki

-rw------- 1 root root 6522 Oct 18 20:45 scheduler.kubeconfig

[root@k8s-master01 pki]# ls -l /etc/kubernetes/pki/

total 72

-rw-------. 1 root root 1675 Dec 10 13:52 admin-key.pem

-rw-r--r--. 1 root root 1424 Dec 10 13:52 admin.pem

-rw-------. 1 root root 1675 Dec 10 13:52 apiserver-key.pem

-rw-r--r--. 1 root root 1732 Dec 10 13:52 apiserver.pem

-rw-------. 1 root root 1679 Dec 10 13:52 ca-key.pem

-rw-r--r--. 1 root root 1342 Dec 10 13:52 ca.pem

-rw-------. 1 root root 1679 Dec 10 13:51 controller-manager-key.pem

-rw-r--r--. 1 root root 1480 Dec 10 13:51 controller-manager.pem

-rw-------. 1 root root 1679 Dec 10 13:51 front-proxy-ca-key.pem

-rw-r--r--. 1 root root 1094 Dec 10 13:51 front-proxy-ca.pem

-rw-------. 1 root root 1675 Dec 10 13:51 front-proxy-client-key.pem

-rw-r--r--. 1 root root 1188 Dec 10 13:51 front-proxy-client.pem

-rw-------. 1 root root 1675 Dec 10 13:51 kube-proxy-key.pem

-rw-r--r--. 1 root root 1444 Dec 10 13:51 kube-proxy.pem

-rw-------. 1 root root 1704 Dec 11 00:20 sa.key

-rw-r--r--. 1 root root 451 Dec 11 00:20 sa.pub

-rw-------. 1 root root 1679 Dec 10 13:51 scheduler-key.pem

-rw-r--r--. 1 root root 1456 Dec 10 13:51 scheduler.pem

[root@k8s-master01 pki]# ls -l /etc/kubernetes/pki/ | wc -l

19

Deploy etcd

The etcd configuration is largely the same on every master node; change the hostname and IP addresses in each node's configuration.
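Since only the node name and IP address differ between the three files, they can be rendered from one template. The sketch below writes abridged configs into a scratch directory; `render_etcd_config` is a hypothetical helper, only the node-specific fields and the client TLS section are shown, and the output would still need to be reviewed and copied to /etc/etcd/etcd.config.yaml on each node.

```shell
# render_etcd_config NAME IP OUTDIR: write an abridged per-node etcd config.
# Hypothetical helper; the full option list appears in the per-node configs in this guide.
render_etcd_config() {
  cfg_name=$1
  cfg_ip=$2
  cfg_dir=$3
  cat > "$cfg_dir/$cfg_name.etcd.config.yaml" << EOF
name: '$cfg_name'
data-dir: /data/etcd
wal-dir: /data/etcd/wal
listen-peer-urls: 'https://$cfg_ip:2380'
listen-client-urls: 'https://$cfg_ip:2379,http://127.0.0.1:2379'
initial-advertise-peer-urls: 'https://$cfg_ip:2380'
advertise-client-urls: 'https://$cfg_ip:2379'
initial-cluster: 'k8s-master01=https://192.168.77.91:2380,k8s-master02=https://192.168.77.92:2380,k8s-master03=https://192.168.77.93:2380'
initial-cluster-token: 'etcd-cluster'
initial-cluster-state: 'new'
client-transport-security:
  cert-file: '/etc/etcd/ssl/etcd.pem'
  key-file: '/etc/etcd/ssl/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'
EOF
}

# Render all three configs into a scratch directory for inspection.
outdir=$(mktemp -d)
render_etcd_config k8s-master01 192.168.77.91 "$outdir"
render_etcd_config k8s-master02 192.168.77.92 "$outdir"
render_etcd_config k8s-master03 192.168.77.93 "$outdir"
ls "$outdir"
```

Keeping the shared settings in one place avoids the copy-and-edit mistakes that are easy to make when three near-identical files are maintained by hand.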

master01

mkdir -p /data/etcd

cat > /etc/etcd/etcd.config.yaml << EOF

name: 'k8s-master01'

data-dir: /data/etcd

wal-dir: /data/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.77.91:2380'

listen-client-urls: 'https://192.168.77.91:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.77.91:2380'

advertise-client-urls: 'https://192.168.77.91:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.77.91:2380,k8s-master02=https://192.168.77.92:2380,k8s-master03=https://192.168.77.93:2380'

initial-cluster-token: 'etcd-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

master02

mkdir -p /data/etcd

cat > /etc/etcd/etcd.config.yaml << EOF

name: 'k8s-master02'

data-dir: /data/etcd

wal-dir: /data/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.77.92:2380'

listen-client-urls: 'https://192.168.77.92:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.77.92:2380'

advertise-client-urls: 'https://192.168.77.92:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.77.91:2380,k8s-master02=https://192.168.77.92:2380,k8s-master03=https://192.168.77.93:2380'

initial-cluster-token: 'etcd-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

master03

mkdir -p /data/etcd

cat > /etc/etcd/etcd.config.yaml << EOF

name: 'k8s-master03'

data-dir: /data/etcd

wal-dir: /data/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://192.168.77.93:2380'

listen-client-urls: 'https://192.168.77.93:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://192.168.77.93:2380'

advertise-client-urls: 'https://192.168.77.93:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-master01=https://192.168.77.91:2380,k8s-master02=https://192.168.77.92:2380,k8s-master03=https://192.168.77.93:2380'

initial-cluster-token: 'etcd-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

peer-transport-security:

  cert-file: '/etc/etcd/ssl/etcd.pem'

  key-file: '/etc/etcd/ssl/etcd-key.pem'

  peer-client-cert-auth: true

  trusted-ca-file: '/etc/etcd/ssl/etcd-ca.pem'

  auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

Create the etcd service

cat > /usr/lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yaml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

Enable etcd

systemctl daemon-reload

systemctl enable --now etcd

Check the etcd status

export ETCDCTL_API=3

etcdctl --endpoints="192.168.77.91:2379,192.168.77.92:2379,192.168.77.93:2379" \

--cacert=/etc/etcd/ssl/etcd-ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

endpoint status --write-out=table

etcdctl --endpoints="192.168.77.91:2379,192.168.77.92:2379,192.168.77.93:2379" \

--cacert=/etc/etcd/ssl/etcd-ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

member list --write-out=table

etcdctl --endpoints="192.168.77.91:2379,192.168.77.92:2379,192.168.77.93:2379" \

--cacert=/etc/etcd/ssl/etcd-ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

endpoint health --write-out=table

High-availability configuration

haproxy

Install keepalived and haproxy

dnf install keepalived haproxy -y

Configure HAProxy on all haproxy nodes; the configuration is identical on each

cat > /etc/haproxy/haproxy.cfg <<EOF

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

listen monitor

bind 0.0.0.0:8100

mode http

stats enable

stats uri /

stats refresh 5s

frontend k8s-master

bind 0.0.0.0:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server k8s-master01 192.168.77.91:6443 check

server k8s-master02 192.168.77.92:6443 check

server k8s-master03 192.168.77.93:6443 check

EOF

keepalived

keepalived01

Configure Keepalived

The configuration differs per node; pay attention to each node's IP and interface

cat > /etc/keepalived/keepalived.conf << EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state MASTER

interface ens192

mcast_src_ip 192.168.77.101

virtual_router_id 61

priority 100

nopreempt

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.77.100

}

track_script {

chk_apiserver

}

}

EOF

keepalived02

cat > /etc/keepalived/keepalived.conf << EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface ens192

mcast_src_ip 192.168.77.102

virtual_router_id 61

priority 90

nopreempt

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.77.100

}

track_script {

chk_apiserver

}

}

EOF

Health check configuration

cat > /etc/keepalived/check_apiserver.sh << \EOF

#!/bin/bash

err=0

for k in $(seq 1 3)

do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

chmod +x /etc/keepalived/check_apiserver.sh

Start haproxy and keepalived

systemctl daemon-reload

systemctl enable --now haproxy

systemctl enable --now keepalived

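systemctl restart haproxy keepalived

Test high availability

ping 192.168.77.100

Shut down the primary node and check whether the VIP moves to the backup node.

Note: apiserver is not deployed yet, so telnet to port 8443 will not work, because there is nothing on port 6443 to balance to.

Deploy Kubernetes

Master nodes

Note: the base component configuration must be completed first

Create the required directories on all nodes

mkdir -p /etc/kubernetes/manifests/ /var/lib/kubelet /var/log/kubernetes

apiserver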

- Note that the k8s service CIDR is 10.96.0.0/12. It must not overlap with the host network or the Pod network

- Adjust as needed

Configure the kube-apiserver service on all master nodes. Set --advertise-address to the node's own IP: IPVS forwards 10.96.0.1:443 to that IP on port 6443, and the calico installation connects to 10.96.0.1, so that backend must be healthy.

cat > /usr/lib/systemd/system/kube-apiserver.service << \EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--allow-privileged=true \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--advertise-address=192.168.77.91 \

--service-cluster-ip-range=10.96.0.0/12 \

--service-node-port-range=30000-32767 \

--etcd-servers=https://192.168.77.91:2379,https://192.168.77.92:2379,https://192.168.77.93:2379 \

--etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--client-ca-file=/etc/kubernetes/pki/ca.pem \

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/pki/sa.pub \

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

systemctl status kube-apiserver

controller-manager

Configure the kube-controller-manager service on all master nodes

cat > /usr/lib/systemd/system/kube-controller-manager.service << \EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \

--v=2 \

--bind-address=127.0.0.1 \

--root-ca-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \

--service-account-private-key-file=/etc/kubernetes/pki/sa.key \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \

--leader-elect=true \

--use-service-account-credentials=true \

--node-monitor-grace-period=40s \

--node-monitor-period=5s \

--pod-eviction-timeout=2m0s \

--controllers=*,bootstrapsigner,tokencleaner \

--allocate-node-cidrs=true \

--service-cluster-ip-range=10.96.0.0/12 \

--cluster-cidr=172.16.0.0/12 \

--node-cidr-mask-size-ipv4=24 \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

systemctl status kube-controller-manager

scheduler

Configure the kube-scheduler service on all master nodes

cat > /usr/lib/systemd/system/kube-scheduler.service << \EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--v=2 \

--bind-address=127.0.0.1 \

--leader-elect=true \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl restart kube-scheduler

systemctl status kube-scheduler

TLS Bootstrapping configuration

Create the bootstrap configuration on master01

Generate the bootstrap token

TOKEN_ID=$(openssl rand -hex 3)

TOKEN_SECRET=$(openssl rand -hex 8)

BOOTSTRAP_TOKEN=${TOKEN_ID}.${TOKEN_SECRET}

$ echo $BOOTSTRAP_TOKEN

9afe5c.07be6c9e50a9dd5

# Set cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/pki/k8s/ca.pem \

--embed-certs=true --server=https://192.168.77.100:8443 \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

# Set client credentials

# The token must match token-id and token-secret in bootstrap-secret.yaml below

kubectl config set-credentials tls-bootstrap-token-user \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

# Set the context, which associates the user with the cluster

kubectl config set-context tls-bootstrap-token-user@kubernetes \

--cluster=kubernetes --user=tls-bootstrap-token-user \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

# Set the default context

kubectl config use-context tls-bootstrap-token-user@kubernetes \

--kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

# The token is defined in bootstrap-secret.yaml; change it there if needed

mkdir -p /root/.kube

cp /etc/kubernetes/admin.kubeconfig /root/.kube/config

- Note: token-id and token-secret in bootstrap-secret.yaml must match the token used in the commands above (i.e. 9afe5c.07be6c9e50a9dd5)
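Because a mismatched or malformed token only shows up later as nodes failing to register, it may help to validate the token format before creating the Secret. Kubernetes bootstrap tokens have the form `[a-z0-9]{6}.[a-z0-9]{16}`; the sketch below checks that, with `valid_bootstrap_token` being a hypothetical helper name. Note that the sample value printed in this guide has a 15-character secret, so it looks truncated and does not pass the check; a token generated with the `openssl rand` commands above has the right lengths.

```shell
# Return 0 if the argument has the bootstrap token shape:
# 6-character ID, a dot, then a 16-character secret, all lowercase alphanumerics.
valid_bootstrap_token() {
  printf '%s\n' "$1" | LC_ALL=C grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# First value: a well-formed placeholder. Second value: the sample shown in this guide.
for t in "abcdef.0123456789abcdef" "9afe5c.07be6c9e50a9dd5"; do
  if valid_bootstrap_token "$t"; then
    echo "valid:   $t"
  else
    echo "invalid: $t"
  fi
done
```

In practice the call would be `valid_bootstrap_token "$BOOTSTRAP_TOKEN"` right after the token is generated.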

mkdir -p /etc/kubernetes/yaml

cat > /etc/kubernetes/yaml/bootstrap-secret.yaml << EOF

apiVersion: v1

kind: Secret

metadata:

name: bootstrap-token-${TOKEN_ID}

namespace: kube-system

type: bootstrap.kubernetes.io/token

stringData:

description: "The default bootstrap token generated by 'kubelet '."

token-id: ${TOKEN_ID}

token-secret: ${TOKEN_SECRET}

usage-bootstrap-authentication: "true"

usage-bootstrap-signing: "true"

auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress

EOF

cat > /etc/kubernetes/yaml/kubelet-bootstrap-rbac.yaml << EOF

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kubelet-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:node-bootstrapper

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:nodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-certificate-rotation

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:nodes

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

annotations:

rbac.authorization.kubernetes.io/autoupdate: "true"

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: system:kube-apiserver-to-kubelet

rules:

- apiGroups:

- ""

resources:

- nodes/proxy

- nodes/stats

- nodes/log

- nodes/spec

- nodes/metrics

verbs:

- "*"

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: system:kube-apiserver

namespace: ""

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:kube-apiserver-to-kubelet

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: User

name: kube-apiserver

EOF

kubectl create -f /etc/kubernetes/yaml/bootstrap-secret.yaml

kubectl create -f /etc/kubernetes/yaml/kubelet-bootstrap-rbac.yaml

Distribute configuration files

Note: if kubectl get node later returns no nodes, the token in bootstrap-kubelet.kubeconfig most likely does not match the Secret; correct it and restart kubelet.
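To diagnose the token mismatch described in the note, the token stored in the kubeconfig can be compared with the ID and secret used for the Secret. This is a rough sketch that assumes the kubeconfig holds a single `token:` entry, as written by `kubectl config set-credentials --token`; `kubeconfig_token` and `token_matches` are hypothetical helper names, and the demo file only mimics the relevant part of a kubeconfig.

```shell
# Print the first bearer token found in a kubeconfig file.
kubeconfig_token() {
  awk '$1 == "token:" { print $2; exit }' "$1"
}

# Return 0 if the kubeconfig token equals "<token-id>.<token-secret>".
# Usage: token_matches <kubeconfig> <token-id> <token-secret>
token_matches() {
  [ "$(kubeconfig_token "$1")" = "$2.$3" ]
}

# Demo against a throwaway file with placeholder values.
demo=$(mktemp)
cat > "$demo" <<'EOF'
users:
- name: tls-bootstrap-token-user
  user:
    token: abcdef.0123456789abcdef
EOF
if token_matches "$demo" abcdef 0123456789abcdef; then
  echo "token matches"
else
  echo "token mismatch"
fi
rm -f "$demo"
```

On a real node the call would be `token_matches /etc/kubernetes/bootstrap-kubelet.kubeconfig "$TOKEN_ID" "$TOKEN_SECRET"`.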

host=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

user=sunday

for i in ${host[@]}; do

rsync --rsync-path="sudo rsync" /etc/kubernetes/{bootstrap-kubelet,kube-proxy}.kubeconfig $user@$i:/etc/kubernetes/;

done

Check cluster status

[root@k8s-master01 system]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

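etcd-2 Healthy {"health":"true","reason":""}

Worker nodes

Note: the basic component setup must be completed first.

Distribute certificates

Distribute certificates from master01 to the other nodes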

user=sunday

host=(k8s-master02 k8s-master03 k8s-node01 k8s-node02)

for i in ${host[@]}; do

ssh $user@$i "sudo mkdir -p /etc/kubernetes/pki";

rsync --rsync-path="sudo rsync" /opt/pki/k8s/{ca.pem,ca-key.pem,front-proxy-ca.pem} $user@$i:/etc/kubernetes/pki/;

done

kubelet configuration

Create the required directories on all nodes

mkdir -p /var/lib/kubelet /etc/kubernetes/manifests/

Configure the kubelet service

cat > /usr/lib/systemd/system/kubelet.service << \EOF

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet-conf.yaml \

--container-runtime=remote \

--runtime-request-timeout=15m \

--container-runtime-endpoint=unix:///run/containerd/containerd.sock \

--cgroup-driver=systemd \

--node-labels=node.kubernetes.io/node=

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

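EOF

- Note on --node-labels=node.kubernetes.io/node=: on Ubuntu set the value to '', on CentOS leave it empty.

Create the kubelet configuration file

Note: if you changed the Kubernetes service CIDR, also update clusterDNS in kubelet-conf.yaml to the tenth address of the service CIDR, for example 10.96.0.10.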
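The "tenth address" rule can be computed rather than worked out by hand, which is handy when the service CIDR does not start on a familiar boundary. A small sketch follows; `nth_ip` is a hypothetical helper, not a kubelet or kubectl feature.

```shell
# Print the n-th address of an IPv4 CIDR, counting from the network address.
# Usage: nth_ip <cidr> <n>
nth_ip() {
  cidr=$1; n=$2
  bits=${cidr#*/}
  old_ifs=$IFS; IFS=.
  set -- ${cidr%/*}    # split the address on dots into $1..$4
  IFS=$old_ifs
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  base=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  ip=$(( (base & mask) + n ))
  echo "$(( (ip >> 24) & 255 )).$(( (ip >> 16) & 255 )).$(( (ip >> 8) & 255 )).$(( ip & 255 ))"
}

nth_ip 10.96.0.0/12 10   # address to use for clusterDNS
nth_ip 10.96.0.0/12 1    # address of the built-in kubernetes Service
```

With the default CIDR of this guide the two calls print 10.96.0.10 and 10.96.0.1, matching the values used in kubelet-conf.yaml and in the CoreDNS installation step.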

cat > /etc/kubernetes/kubelet-conf.yaml <<EOF

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

authentication:

anonymous:

enabled: false

webhook:

cacheTTL: 2m0s

enabled: true

x509:

clientCAFile: /etc/kubernetes/pki/ca.pem

authorization:

mode: Webhook

webhook:

cacheAuthorizedTTL: 5m0s

cacheUnauthorizedTTL: 30s

cgroupDriver: systemd

cgroupsPerQOS: true

clusterDNS:

- 10.96.0.10

clusterDomain: cluster.local

containerLogMaxFiles: 5

containerLogMaxSize: 10Mi

contentType: application/vnd.kubernetes.protobuf

cpuCFSQuota: true

cpuManagerPolicy: none

cpuManagerReconcilePeriod: 10s

enableControllerAttachDetach: true

enableDebuggingHandlers: true

enforceNodeAllocatable:

- pods

eventBurst: 10

eventRecordQPS: 5

evictionHard:

imagefs.available: 15%

memory.available: 100Mi

nodefs.available: 10%

nodefs.inodesFree: 5%

evictionPressureTransitionPeriod: 5m0s

failSwapOn: true

fileCheckFrequency: 20s

hairpinMode: promiscuous-bridge

healthzBindAddress: 127.0.0.1

healthzPort: 10248

httpCheckFrequency: 20s

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

imageMinimumGCAge: 2m0s

iptablesDropBit: 15

iptablesMasqueradeBit: 14

kubeAPIBurst: 10

kubeAPIQPS: 5

makeIPTablesUtilChains: true

maxOpenFiles: 1000000

maxPods: 110

nodeStatusUpdateFrequency: 10s

oomScoreAdj: -999

podPidsLimit: -1

registryBurst: 10

registryPullQPS: 5

resolvConf: /etc/resolv.conf

rotateCertificates: true

runtimeRequestTimeout: 2m0s

serializeImagePulls: true

staticPodPath: /etc/kubernetes/manifests

streamingConnectionIdleTimeout: 4h0m0s

syncFrequency: 1m0s

volumeStatsAggPeriod: 1m0s

EOF

Start kubelet on all nodes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

At this point it is normal if the system log /var/log/messages shows only the following message:

Unable to update cni config: no networks found in /etc/cni/net.d

[root@k8s-master01 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady <none> 14m v1.26.11

k8s-master02 NotReady <none> 2m52s v1.26.11

k8s-master03 NotReady <none> 2m51s v1.26.11

k8s-node01 NotReady <none> 4m59s v1.26.11

k8s-node02 NotReady <none> 4m55s v1.26.11

kube-proxy configuration

cat > /etc/kubernetes/kube-proxy.yaml << EOF

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

clientConnection:

acceptContentTypes: ""

burst: 10

contentType: application/vnd.kubernetes.protobuf

kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

qps: 5

clusterCIDR: 172.16.0.0/12

configSyncPeriod: 15m0s

conntrack:

max: null

maxPerCore: 32768

min: 131072

tcpCloseWaitTimeout: 1h0m0s

tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:

masqueradeAll: false

masqueradeBit: 14

minSyncPeriod: 0s

syncPeriod: 30s

ipvs:

masqueradeAll: true

minSyncPeriod: 5s

scheduler: "rr"

syncPeriod: 30s

kind: KubeProxyConfiguration

metricsBindAddress: 127.0.0.1:10249

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms

EOF

cat > /usr/lib/systemd/system/kube-proxy.service << \EOF

[Unit]

Description=Kubernetes Kube Proxy

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--v=2

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

Start kube-proxy

systemctl daemon-reload

systemctl enable --now kube-proxy

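systemctl status kube-proxy

Install Calico

Run the following steps on master01 only.

CNI plugin conflict:

Disable the default containerd CNI configuration to avoid a conflict. If this directory contains several CNI configuration files, the first one in lexicographic filename order is used, which is why the containerd-net plugin has been selected by default until now.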
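To see which configuration file currently wins, the lexicographic selection can be reproduced with `find` and `sort`. This is only a sketch: `first_cni_conf` is a hypothetical helper, the extension list (`.conf`, `.conflist`, `.json`) is an assumption about which files are considered, and `20-example.conflist` is a made-up file name used for the demo.

```shell
# Print the CNI config file that sorts first in the given directory
# (defaults to /etc/cni/net.d).
first_cni_conf() {
  find "${1:-/etc/cni/net.d}" -maxdepth 1 -type f \
    \( -name '*.conf' -o -name '*.conflist' -o -name '*.json' \) 2>/dev/null \
    | LC_ALL=C sort | head -n 1
}

# Demo in a scratch directory so nothing under /etc is touched.
demo_dir=$(mktemp -d)
touch "$demo_dir/20-example.conflist" "$demo_dir/10-containerd-net.conflist"
echo "selected: $(basename "$(first_cni_conf "$demo_dir")")"
rm -rf "$demo_dir"
```

Calling `first_cni_conf` without arguments on a node inspects the real /etc/cni/net.d directory.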

mv /etc/cni/net.d/10-containerd-net.conflist /etc/cni/net.d/10-containerd-net.conflist.bak

ifconfig cni0 down && ip link delete cni0

systemctl daemon-reload

systemctl restart containerd kubelet

# Option: the etcd datastore, which talks to etcd directly for higher performance

curl https://raw.githubusercontent.com/projectcalico/calico/v3.24.5/manifests/calico-etcd.yaml -O

# https://docs.projectcalico.org/getting-started/kubernetes/installation/config-options

This guide installs Calico with apiserver authentication (the Kubernetes API datastore)

wget https://docs.projectcalico.org/manifests/calico.yaml --no-check-certificate

# or, to pin a release (run only one of the two downloads)

wget --no-check-certificate https://github.com/projectcalico/calico/raw/v3.26.4/manifests/calico.yaml

Prevent NetworkManager from interfering with Calico

cat > /etc/NetworkManager/conf.d/calico.conf << EOF

[keyfile]

unmanaged-devices=interface-name:cali*;interface-name:tunl*;interface-name:vxlan.calico;interface-name:vxlan-v6.calico;interface-name:wireguard.cali;interface-name:wg-v6.cali

EOF

Linux conntrack table exhaustion

sysctl -w net.netfilter.nf_conntrack_max=1000000

echo "net.netfilter.nf_conntrack_max=1000000" >> /etc/sysctl.conf

Change the pod CIDR

vim calico.yaml

POD_CIDR="172.16.0.0/12"

sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@' calico.yaml

sed -i 's@# value: "192.168.0.0/16"@ value: '"$POD_CIDR"'@' calico.yaml
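The two sed patterns above depend on the exact spacing of the commented lines. A whitespace-tolerant variant is sketched below, assuming GNU sed and assuming the upstream manifest contains the lines `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"` at the same indentation; `set_calico_pool_cidr` is a hypothetical helper name, and the demo runs on a two-line excerpt rather than the real calico.yaml.

```shell
# Uncomment CALICO_IPV4POOL_CIDR and set its value, keeping the original indentation.
# Usage: set_calico_pool_cidr <cidr> <manifest>
set_calico_pool_cidr() {
  cidr=$1; file=$2
  sed -i -E \
    -e 's@^([[:space:]]*)# - name: CALICO_IPV4POOL_CIDR@\1- name: CALICO_IPV4POOL_CIDR@' \
    -e 's@^([[:space:]]*)#[[:space:]]+value: "192\.168\.0\.0/16"@\1  value: "'"$cidr"'"@' \
    "$file"
}

# Demo on an excerpt indented by 12 spaces (assumed layout of the commented block).
sample=$(mktemp)
printf '%12s%s\n' '' '# - name: CALICO_IPV4POOL_CIDR' '' '#   value: "192.168.0.0/16"' > "$sample"
set_calico_pool_cidr "172.16.0.0/12" "$sample"
cat "$sample"
rm -f "$sample"
```

After running it against the real manifest, `grep -A1 CALICO_IPV4POOL_CIDR calico.yaml` is a quick way to confirm that both lines were changed.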

Around line 4800:

- name: CALICO_IPV4POOL_CIDR

value: 172.16.0.0/12

- name: IP_AUTODETECTION_METHOD

value: "interface=ens192"

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"

kubectl apply -f calico.yaml

Check the Calico status

[root@k8s-master01 ~]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-6bb4597c4f-smvmr 1/1 Running 0 2m16s

calico-node-2bmx4 1/1 Running 0 2m16s

calico-node-4jpvn 1/1 Running 0 2m16s

calico-node-7bh4b 1/1 Running 0 2m16s

calico-node-l5wch 1/1 Running 0 2m16s

calico-node-r896n 1/1 Running 0 2m16s

calicoctl (optional)

wget https://github.com/projectcalico/calico/releases/download/v3.24.2/calicoctl-linux-amd64 -O /usr/local/bin/calicoctl

chmod +x /usr/local/bin/calicoctl

# Not needed if ~/.kube/config is already configured

export KUBECONFIG=/etc/kubernetes/admin.kubeconfig

export DATASTORE_TYPE=kubernetes

[root@k8s-master01 ~]# calicoctl get nodes

NAME

k8s-master01

k8s-master02

k8s-master03

k8s-node01

k8s-node02

[root@k8s-master01 ~]# calicoctl get ippool -o wide

NAME CIDR NAT IPIPMODE VXLANMODE DISABLED DISABLEBGPEXPORT SELECTOR

default-ipv4-ippool 172.16.0.0/12 true Always Never false false all()

[root@k8s-master01 ~]# calicoctl node status

Calico process is running.

IPv4 BGP status

+---------------+-------------------+-------+----------+-------------+

| PEER ADDRESS | PEER TYPE | STATE | SINCE | INFO |

+---------------+-------------------+-------+----------+-------------+

| 192.168.77.92 | node-to-node mesh | up | 11:10:04 | Established |

| 192.168.77.93 | node-to-node mesh | up | 11:10:04 | Established |

| 192.168.77.94 | node-to-node mesh | up | 13:00:34 | Established |

| 192.168.77.95 | node-to-node mesh | up | 11:10:05 | Established |

+---------------+-------------------+-------+----------+-------------+

Install CoreDNS

git clone https://github.com/coredns/deployment.git

cd deployment/kubernetes

./deploy.sh -s -i 10.96.0.10 | kubectl apply -f -

[root@k8s-master01 kubernetes]# kubectl get po -n kube-system -l k8s-app=kube-dns

NAME READY STATUS RESTARTS AGE

coredns-6f4b4bd8fb-v26qh 1/1 Running 0 47s

Install Metrics-server

Sync the certificate to all worker nodes

for i in k8s-node01 k8s-node02;do

scp /opt/pki/k8s/front-proxy-ca.pem $i:/opt/pki/k8s/

done

mkdir ~/metrics-server && cd ~/metrics-server

cat > metrics-server.yaml << EOF

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

rbac.authorization.k8s.io/aggregate-to-admin: "true"

rbac.authorization.k8s.io/aggregate-to-edit: "true"

rbac.authorization.k8s.io/aggregate-to-view: "true"

name: system:aggregated-metrics-reader

rules:

- apiGroups:

- metrics.k8s.io

resources:

- pods

- nodes

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

rules:

- apiGroups:

- ""

resources:

- pods

- nodes

- nodes/stats

- namespaces

- configmaps

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server-auth-reader

namespace: kube-system

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server:system:auth-delegator

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:auth-delegator

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:metrics-server

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: v1

kind: Service

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

ports:

- name: https

port: 443

protocol: TCP

targetPort: https

selector:

k8s-app: metrics-server

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

selector:

matchLabels:

k8s-app: metrics-server

strategy:

rollingUpdate:

maxUnavailable: 0

template:

metadata:

labels:

k8s-app: metrics-server

spec:

containers:

- args:

- --cert-dir=/tmp

- --secure-port=4443

- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

- --kubelet-use-node-status-port

- --metric-resolution=15s

- --kubelet-insecure-tls

- --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem # change to front-proxy-ca.crt for kubeadm

- --requestheader-username-headers=X-Remote-User

- --requestheader-group-headers=X-Remote-Group

- --requestheader-extra-headers-prefix=X-Remote-Extra-

image: registry.aliyuncs.com/google_containers/metrics-server:v0.5.0

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /livez

port: https

scheme: HTTPS

periodSeconds: 10

name: metrics-server

ports:

- containerPort: 4443

name: https

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /readyz

port: https

scheme: HTTPS

initialDelaySeconds: 20

periodSeconds: 10

resources:

requests:

cpu: 100m

memory: 200Mi

securityContext:

readOnlyRootFilesystem: true

runAsNonRoot: true

runAsUser: 1000

volumeMounts:

- mountPath: /tmp

name: tmp-dir

- name: ca-ssl

mountPath: /etc/kubernetes/pki

nodeSelector:

kubernetes.io/os: linux

priorityClassName: system-cluster-critical

serviceAccountName: metrics-server

volumes:

- emptyDir: {}

name: tmp-dir

- name: ca-ssl

hostPath:

path: /etc/kubernetes/pki

---

apiVersion: apiregistration.k8s.io/v1

kind: APIService

metadata:

labels:

k8s-app: metrics-server

name: v1beta1.metrics.k8s.io

spec:

group: metrics.k8s.io

groupPriorityMinimum: 100

insecureSkipTLSVerify: true

service:

name: metrics-server

namespace: kube-system

version: v1beta1

versionPriority: 100

EOF

kubectl apply -f metrics-server.yaml

[root@k8s-master01 ~]# kubectl top node

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

k8s-master01 164m 8% 1232Mi 65%

k8s-master02 149m 7% 1210Mi 64%

k8s-master03 161m 8% 1197Mi 63%

k8s-node01 64m 6% 867Mi 46%

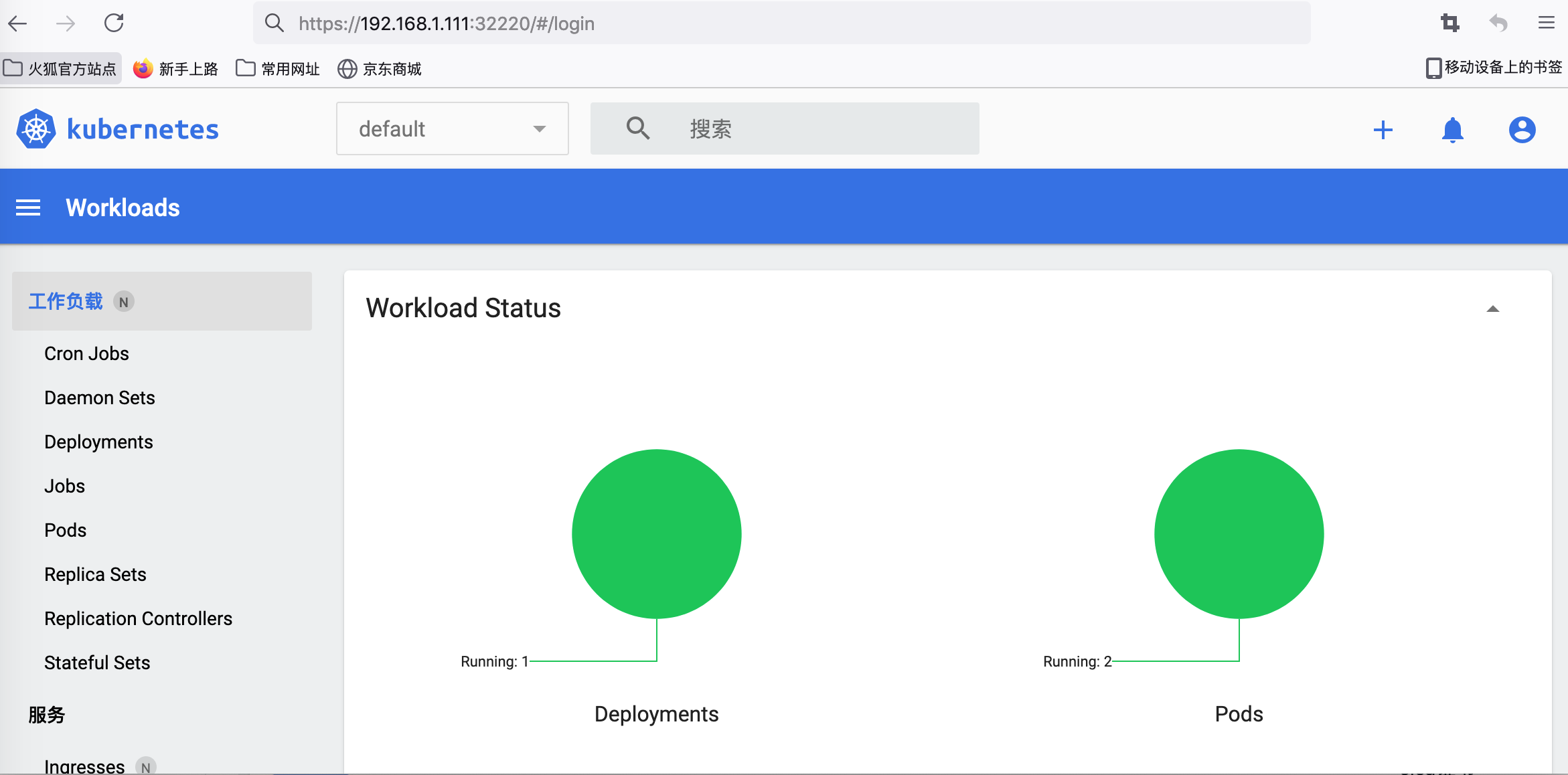

k8s-node02 63m 6% 1230Mi 65% 安装Dashboard

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

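# The default image is docker.io/kubernetesui/dashboard; a registry mirror inside China is sufficient

# The registry.aliyuncs.com/google_containers image at v2.7.0 fails with "exec /dashboard: exec format error", probably because the wrong architecture was pulled

kubectl apply -f recommended.yaml

cat > dashboard-user.yaml << EOF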

apiVersion: v1

kind: ServiceAccount

metadata:

name: admin-user

namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: admin-user

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: admin-user

namespace: kubernetes-dashboard

EOF

kubectl apply -f dashboard-user.yaml

Change the dashboard Service type to NodePort

[root@k8s-master01 ~]# kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

...

sessionAffinity: None

type: NodePort # change ClusterIP to NodePort

status:

loadBalancer: {}[root@k8s-master01 ~]# kubectl get svc kubernetes-dashboard -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

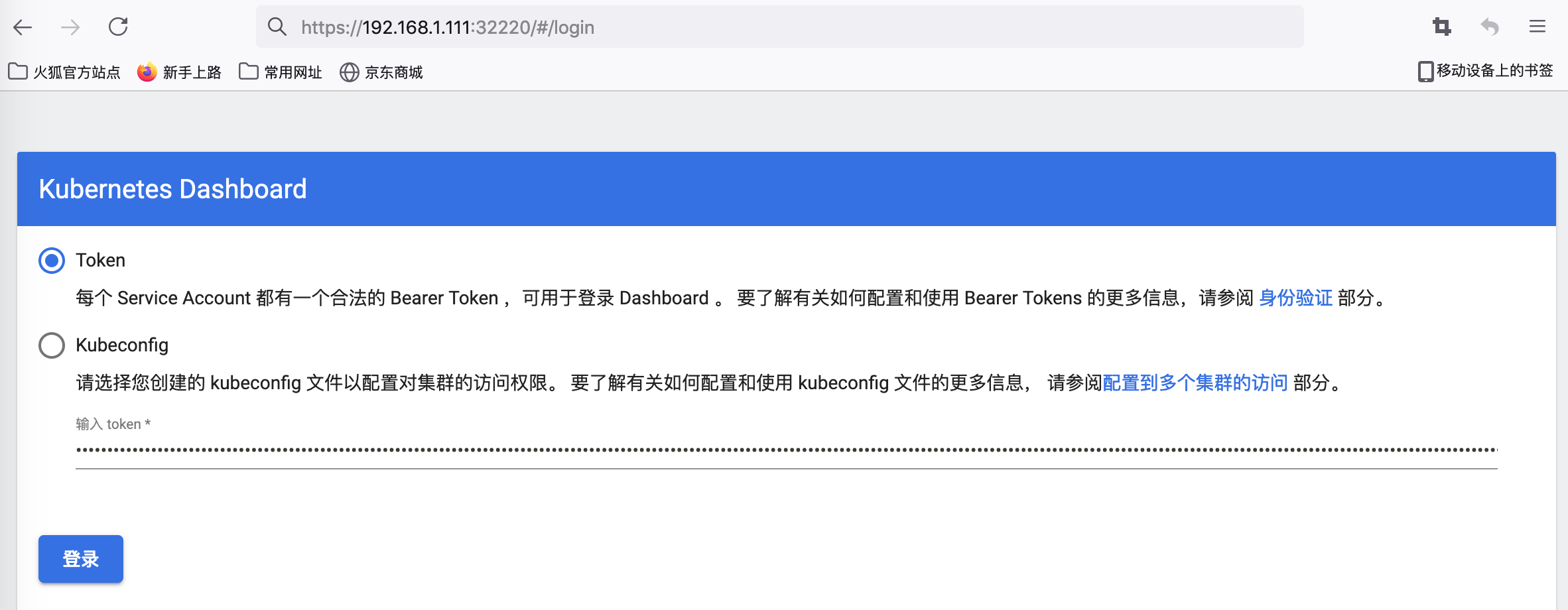

kubernetes-dashboard NodePort 10.101.102.61 <none> 443:32220/TCP 2m54s创建token访问

kubectl -n kubernetes-dashboard create token admin-user

Copy the generated token: yJhbGciOiJSUzI1NiIsImtpZCI6ImVvQzxxx...

Open https://vip:NodePort in Firefox

(Chrome refuses to log in unless the dashboard certificate is replaced)
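The NodePort part of the URL is the number after the colon in the PORT(S) column. A tiny sketch for extracting it from a value such as the one in the output above; `node_port_of` is a hypothetical helper, and the VIP is the one used throughout this guide.

```shell
# Extract the node port from a PORT(S) value such as "443:32220/TCP".
node_port_of() {
  ports=${1#*:}          # drop the service port and the colon ("443:")
  echo "${ports%%/*}"    # drop the protocol suffix ("/TCP")
}

port=$(node_port_of "443:32220/TCP")
echo "https://192.168.77.100:${port}"
```

On a live cluster the same number can be read directly with `kubectl get svc kubernetes-dashboard -n kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'`.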

Cluster verification

[root@k8s-master01 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

etcd-2 Healthy {"health":"true","reason":""}

controller-manager Healthy ok

etcd-1 Healthy {"health":"true","reason":""}

etcd-0 Healthy {"health":"true","reason":""}

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready <none> 19h v1.24.6

k8s-master02 Ready <none> 17h v1.24.6

k8s-master03 Ready <none> 17h v1.24.6

k8s-node01 Ready <none> 17h v1.24.6

k8s-node02 Ready <none> 17h v1.24.6

Test DNS with busybox

cat <<EOF | kubectl apply -f -

apiVersion: v1

kind: Pod

metadata:

name: busybox

namespace: default

spec:

containers:

- name: busybox

image: busybox:1.28

command:

- sleep

- "3600"

imagePullPolicy: IfNotPresent

restartPolicy: Always

EOF

[root@k8s-master01 ~]# kubectl exec -it busybox -- nslookup kubernetes

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

[root@k8s-master01 ~]# kubectl exec -it busybox -- ping -c2 www.baidu.com

PING www.baidu.com (14.215.177.38): 56 data bytes

64 bytes from 14.215.177.38: seq=0 ttl=49 time=9.445 ms

64 bytes from 14.215.177.38: seq=1 ttl=49 time=9.524 ms

Test the nginx Service and Pod-to-Pod network connectivity

cat > nginx_deploy.yaml << EOF

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx

spec:

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- image: nginx:alpine

name: nginx

ports:

- containerPort: 80

---

apiVersion: v1

kind: Service

metadata:

name: nginx

spec:

selector:

app: nginx

type: NodePort

ports:

- protocol: TCP

port: 80

targetPort: 80

nodePort: 30001

EOF

kubectl apply -f nginx_deploy.yaml

[root@k8s-master01 ~]# kubectl get svc,pod -owide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 45h <none>

service/nginx NodePort 10.102.100.128 <none> 80:30001/TCP 16m app=nginx

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/busybox 1/1 Running 0 24m 172.18.195.3 k8s-master03 <none> <none>

pod/nginx-6fb79bc456-s84wf 1/1 Running 0 16m 172.25.92.66 k8s-master02 <none> <none>

service_ip=10.102.100.128

pod_ip=172.25.92.66

for i in k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02; do

echo $i

ssh sunday@$i curl -s $service_ip | grep "using nginx" # nginx svc ip

ssh sunday@$i curl -s $pod_ip | grep "using nginx" # pod ip

done

Access the host VIP:nodePort

[root@k8s-master01 ~]# curl -I 192.168.77.100:30001

HTTP/1.1 200 OK

Server: nginx/1.23.2

Date: Thu, 20 Oct 2022 12:22:07 GMT

Content-Type: text/html

Content-Length: 615

Last-Modified: Wed, 19 Oct 2022 10:28:53 GMT

Connection: keep-alive

ETag: "634fd165-267"

Accept-Ranges: bytes

Install command-line completion

yum install bash-completion -y

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

SundayHK
